Image recognition and classification are what make many of the most exciting advances in artificial intelligence possible. But what are the most popular models for detecting and classifying images?

In today’s Development Notes, we sit down with Sergii Shvets, CEO of Bintime to dive into this topic.

What is the Problem of Image Classification?

Rapid development of electronic commerce poses new challenges to the cognitive and computing capabilities of the software. The ever-increasing volume of data and increasing requirements for its validation and organization require the automation of verification and classification processes, including images. Such tasks require cognitive abilities from software, which is impossible without the use of modern algorithms built based on deep learning.

How it all Started?

Deep learning algorithms take inspiration from the biological principles of building the brain apparatus, namely the structure of a neuron and the way neurons are organized into a network. The idea of a perceptron, an artificial neuron, was proposed by Frank Rosenblatt in 1957.

The model proposed by him copes well with linearly separable data. For more complex problems, the perceptron model is not well applicable, and a multilayer neural perceptron model was suggested. This, in turn, created the foundation for a modern deep learning approach, which can solve problems including computer vision and image classification. Actually, that happened in the 60th, but at that time it was held back by the computational power available. In 1990 a renaissance of deep learning happened due to the increasing of computational power of CPUs.

Nowadays many image classification algorithms have been suggested, and the question arises of their reference comparison for an objective assessment of their degree of effectiveness. As a solution, researchers from Princeton University proposed and currently support the ImageNet reference dataset.

As of now, it has 14 million images in 20,000 categories. Every year, the ILSVRC (ImageNet Large Scale Visual Recognition Challenge) is held — where researchers from all over the world compete in the accuracy of image classification using ImageNet benchmark data. This competition gave an extraordinary impetus to the rapid development of image recognition and classification algorithms.

This competition gave an extraordinary impetus to the development of image recognition and classification algorithms.

What is ILSVRC Performance Evaluation Methodology?

The ILSVRC uses several metrics to measure competition performance:

Top-1 or Top-1 Accuracy

This is the percentage of cases in which the proposed model provided a correct classification. Here, the accuracy of the classification should be understood as the coincidence of the reference class with the most probable class according to the prediction of the model being tested.

Top-5 or Top-5 Accuracy

A softer criterion corresponds to the percentage of finding the reference class among the five most likely ones proposed by the model. This is an important criterion because, in the case of complex images, there may be several cognitively valid labels.

Error matrix, or Confusion matrix

A matrix of dimensions NxN (N – the number of classes), which, accordingly, reflects the number of cases when the reference class i was classified by the model as class j.

Accordingly, the matrix is useful for visualizing how the model behaves and analyzing True Positive, True Negative, False Positive, False Negative parameters.

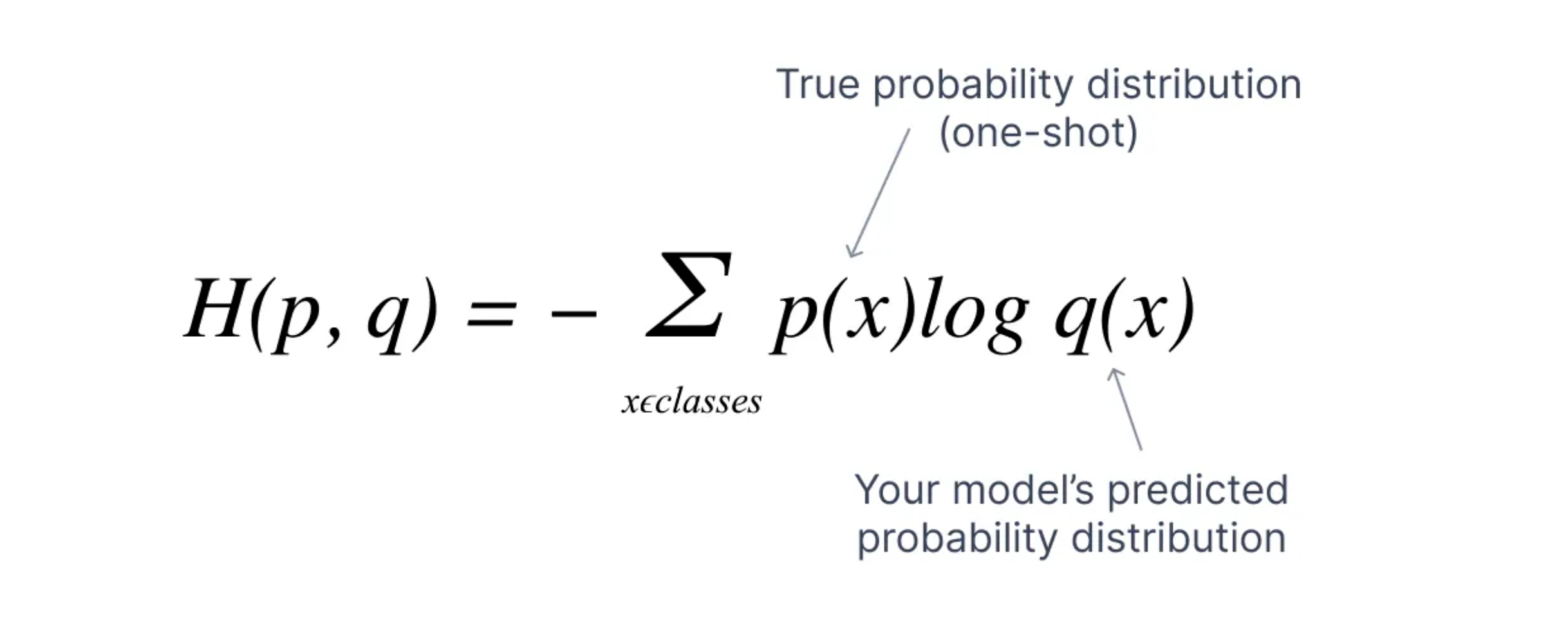

Cross-entropy loss

This metric is calculated according to the formula:

Where:

M — the number of classes

N — the number of samples

yo,c — an indicator (0|1) indicating whether the sample corresponds to class c

po,c — correspondingly predicted probability of sample o belonging to class c

This metric provides an averaged estimate of the model’s overall accuracy performance.

Assessment

For development, researchers use a validation dataset to fine-tune their model parameters. The test set is used during the final evaluation of the proposition.

What are the Current Results?

Thanks to ImageNet and ILSVRC, researchers from all over the world have been allowed to investigate the performance of their personalized models on large-scale test data. As a result, the proposed image classification models achieve extremely high efficiencies.

Current performance status of existing models according to ILSVRC

[Source: https://paperswithcode.com/sota/image-classification-on-imagenet]

Let’s explore the most popular models.

Some of the Modern Models Applicable to Image Classification

OmniVec — 92.4% Top-1 Accuracy

Offered by OpenAI, this framework is multimodal, meaning it works with different modalities (text, images, video, audio, etc.). A separate encoder is used for each data type, but the vector space and backbone network are shared, allowing the model to benefit from using different modalities for training. Training in different modalities is proposed to be conducted sequentially.

Accordingly, different task heads are used for different tasks. A transformer (Vision Transformer, ViT) or a convolutional network can be used as an encoder, or direct data could be used as well. Therefore, information received from different modalities is coordinated and integrated by a common intermediate layer.

CoCa — 91.0% Top-1 Accuracy

This approach was proposed by Google. It combines several paradigms (single-encoder, dual-encoder, and encoder-decoder) in one model, which is trained simultaneously using the method of contrast loss during generation captions (captioning / generative loss).

The encoder is represented by a transformer or a convolutional network. The decoder uses a transformer architecture that does not use cross-attention in the unimodal layers at the first stage. After that, it uses cross-attention to the outputs of the image encoder to learn multimodal image-text representations.

Model Soup BASIC-L — 90.98% Top-1 Accuracy

This technique consists of combining the prediction results of different models through certain weights, averaging, or weighted voting. Models that have proven themselves well within the necessary limits of applications are usually used. Accordingly, the computational cost using this approach can significantly exceed other single-model methods.

However, this approach provides high stability of the solution and costs can be justified in critical applications that require a high degree of reliability.

What are the Ways of Improvement?

Regardless of the narrowness of the problem of image classification, frameworks and models that use a multimodal approach to learning networks are the true leaders in solving classification problems.

This leads to an increase in the number of model parameters, and an increase in computational complexity, and requires an innovative approach to encoders. Therefore, it can be assumed that future performance improvements can be achieved by finding more productive methods for the aforementioned elements as well as by orchestrating existing architectures.

This definitely deserves further research and experimentation.

Sources

- ImageNet Large Scale Visual Recognition Challenge [https://arxiv.org/pdf/1409.0575]rel=””noopener noreferrer”

- OmniVec: Learning robust representations with cross-modal sharing Siddharth Srivastava, Gaurav

- Sharma [https://arxiv.org/pdf/2311.05709v1]rel=””noopener noreferrer”

- CoCa: Contrastive Captioners are Image-Text Foundation Models Jiahui Yu† Zirui Wang† [https://arxiv.org/pdf/2205.01917v2]rel=””noopener noreferrer”

- Mitchell Wortsman. Model soups: averaging weights of multiple fine-tuned models improves accuracy without increasing inference time [https://arxiv.org/pdf/2203.05482v3]rel=””noopener noreferrer”